Latest recommendations

| Id | Title | Authors | Abstract | Picture | Thematic fields | Recommender | Reviewers | Submission date | |
|---|---|---|---|---|---|---|---|---|---|

10 Jan 2024

An approximate likelihood method reveals ancient gene flow between human, chimpanzee and gorilla

Nicolas Galtier

https://doi.org/10.1101/2023.07.06.547897

Aphid: A Novel Statistical Method for Dissecting Gene Flow and Lineage Sorting in Phylogenetic Conflict

Recommended by Alan Rogers based on reviews by Richard Durbin and 2 anonymous reviewers

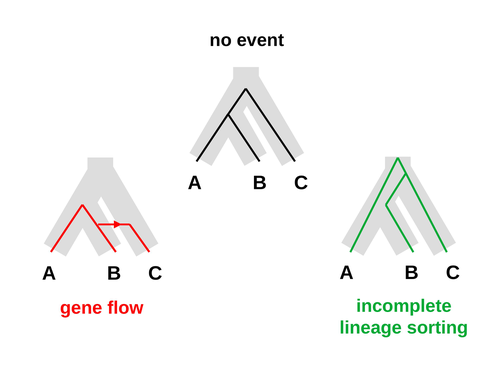

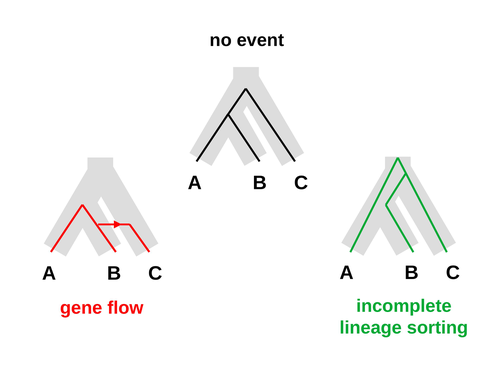

Galtier [1] introduces “Aphid,” a new statistical method that estimates the contributions of gene flow (GF) and incomplete lineage sorting (ILS) to phylogenetic conflict. Aphid is based on the observation that GF tends to make gene genealogies shorter, whereas ILS makes them longer. Rather than fitting the full likelihood, it models the distribution of gene genealogies as a mixture of several canonical gene genealogies in which coalescence times are set equal to their expectations under different models. This simplification makes Aphid far faster than competing methods. In addition, it deals gracefully with bidirectional gene flow, an impossibility under competing models. Because of these advantages, Aphid represents an important addition to the toolkit of evolutionary genetics.

In the interest of speed, Aphid makes several simplifying assumptions. Yet even when these were violated, Aphid did well at estimating parameters from simulated data. It seems to be reasonably robust.

Aphid studies phylogenetic conflict, which occurs when some loci imply one phylogenetic tree and other loci imply another. This happens when the interval between successive speciation events is fairly short. If this interval is too short, however, Aphid’s approximations break down, and its estimates are biased. Galtier suggests caution when the fraction of discordant phylogenetic trees exceeds 50–55%. Thus, Aphid will be most useful when the interval between speciation events is short, but not too short.

Galtier applies the new method to three sets of primate data. In two of these data sets (baboons and African apes), Aphid detects gene flow that would likely be missed by competing methods. These competing methods are primarily sensitive to gene flow that is asymmetric in two senses: (1) greater flow in one direction than the other, and (2) unequal gene flow connecting an outgroup to two sister species.
Aphid finds evidence of symmetric gene flow in the ancestry of baboons and also in that of African apes. The data suggest that ancestral humans and chimpanzees both interbred with ancestral gorillas, and at about the same rate. Aphid’s ability to detect this signature sets it apart from competing methods.

References

[1] Nicolas Galtier (2023) “An approximate likelihood method reveals ancient gene flow between human, chimpanzee and gorilla”. bioRxiv, ver. 3 peer-reviewed and recommended by Peer Community in Mathematical and Computational Biology. https://doi.org/10.1101/2023.07.06.547897

| An approximate likelihood method reveals ancient gene flow between human, chimpanzee and gorilla | Nicolas Galtier | Gene flow and incomplete lineage sorting are two distinct sources of phylogenetic conflict, i.e., gene trees that differ in topology from each other and from the species tree. Distinguishing between the two processes is a key objective of curre... |  | Evolutionary Biology, Genetics and population Genetics, Genomics and Transcriptomics | Alan Rogers | 2023-07-06 18:41:16 |
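The "short, but not too short" window described above can be illustrated with a standard coalescent result that is not part of the recommendation itself: for three species under pure ILS (no gene flow), the expected fraction of gene trees that disagree with the species tree is (2/3)·exp(−T), where T is the interval between the two speciation events in coalescent units. The sketch below assumes that pure-ILS setting; the function names are hypothetical, and this is not Aphid's mixture model.

```python
import math

def discordant_fraction(t_coal: float) -> float:
    """Expected fraction of discordant gene trees for three species
    under pure ILS (assumption: no gene flow, constant population size).

    t_coal: interval between speciation events, in coalescent units.
    """
    if t_coal < 0:
        raise ValueError("interval must be non-negative")
    return (2.0 / 3.0) * math.exp(-t_coal)

def min_interval_for(max_discordance: float) -> float:
    """Shortest interval (coalescent units) that keeps the expected
    discordant fraction at or below max_discordance."""
    if not 0 < max_discordance <= 2.0 / 3.0:
        raise ValueError("threshold must lie in (0, 2/3]")
    return math.log((2.0 / 3.0) / max_discordance)

# Illustration: intervals at which pure ILS alone would reach the
# 50% and 55% discordance levels mentioned in the text.
for threshold in (0.50, 0.55):
    print(threshold, round(min_interval_for(threshold), 3))
```

Under these assumptions, discordance approaches its maximum of 2/3 as the interval shrinks to zero, which is one way to see why very short intervals leave little signal to separate the competing explanations.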

02 May 2023

Population genetics: coalescence rate and demographic parameters inference

Olivier Mazet, Camille Noûs

https://doi.org/10.48550/arXiv.2207.02111

Estimates of Effective Population Size in Subdivided Populations

Recommended by Alan Rogers based on reviews by 2 anonymous reviewers

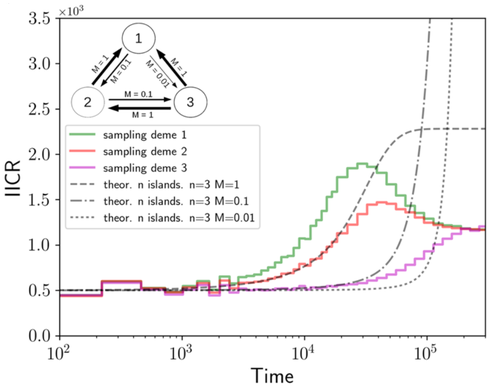

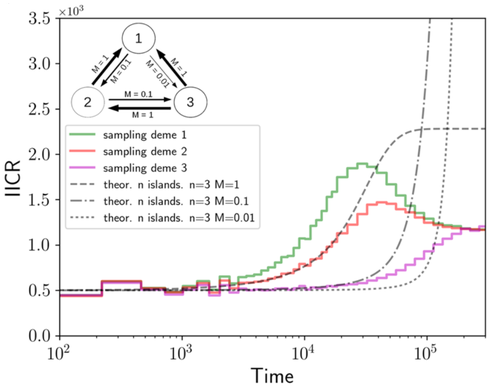

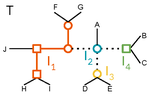

We often use genetic data from a single site, or even a single individual, to estimate the history of effective population size, Ne, over time scales in excess of a million years. Mazet and Noûs [2] emphasize that such estimates may not mean what they seem to mean. The ups and downs of Ne may reflect changes in gene flow or selection, rather than changes in census population size. In fact, gene flow may cause Ne to decline even if the rate of gene flow has remained constant.

Consider for example the estimates of archaic population size in Fig. 1, which show an apparent decline in population size between roughly 700 kya and 300 kya. It is tempting to interpret this as evidence of a declining number of individuals, but that is not the only plausible interpretation.

Each of these estimates is based on the genome of a single diploid individual. As we trace the ancestry of that individual backwards into the past, the ancestors are likely to remain in the same locale for at least a generation or two. Being neighbors, there’s a chance they will mate. This implies that in the recent past, the ancestors of a sampled individual lived in a population of small effective size.

As we continue backwards into the past, there is more and more time for the ancestors to move around on the landscape. The farther back we go, the less likely they are to be neighbors, and the less likely they are to mate. In this more remote past, the ancestors of our sample lived in a population of larger effective size, even if neither the number of individuals nor the rate of gene flow has changed. For a while, then, Ne should increase as we move backwards into the past. This process does not continue forever, because eventually the ancestors will be randomly distributed across the population as a whole. We therefore expect Ne to increase towards an asymptote, which represents the effective size of the entire population.
This simple story gets more complex if there is change in either the census size or the rate of gene flow. Mazet and Noûs [2] have shown that one can mimic real estimates of population history using models in which the rate of gene flow varies, but census size does not. This implies that the curves in Fig. 1 are ambiguous. The observed changes in Ne could reflect changes in census size, gene flow, or both. For this reason, Mazet and Noûs [2] would like to replace the term “effective population size” with an alternative, the “inverse instantaneous coalescence rate,” or IICR. I don’t share this preference, because the same critique could be made of all definitions of Ne. For example, Wright [3, p. 108] showed in 1931 that Ne varies in response to the sex ratio, and this implies that changes in Ne need not involve any change in census size. This is also true when populations are geographically structured, as Mazet and Noûs [2] have emphasized, but this does not seem to require a new vocabulary.
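The sex-ratio point can be made concrete with the textbook formula usually attributed to Wright, Ne = 4·Nm·Nf / (Nm + Nf), where Nm and Nf are the numbers of breeding males and females. The formula is not quoted in the recommendation itself, and the function name below is hypothetical; this is only a minimal sketch of how Ne can change while census size stays fixed.

```python
def ne_from_sex_ratio(n_males: float, n_females: float) -> float:
    """Effective population size with unequal numbers of breeding
    males and females: Ne = 4 * Nm * Nf / (Nm + Nf)."""
    if n_males <= 0 or n_females <= 0:
        raise ValueError("both sexes must have a positive number of breeders")
    return 4.0 * n_males * n_females / (n_males + n_females)

# Census size is held at 1000 breeders; only the sex ratio changes.
for males, females in [(500, 500), (100, 900), (10, 990)]:
    print(males, females, ne_from_sex_ratio(males, females))
```

With an even sex ratio, Ne equals the census size of 1000; with 100 males and 900 females it falls to 360, although the number of individuals has not changed.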

Figure 1: PSMC estimates of the history of population size based on three archaic genomes: two Neanderthals and a Denisovan [1].

Mazet and Noûs [2] also show that estimates of Ne can vary in response to selection. It is not hard to see why such an effect might exist. In genomic regions affected by directional or purifying selection, heterozygosity is low, and common ancestors tend to be recent. Such regions may contribute to small estimates of recent Ne. In regions under balancing selection, heterozygosity is high, and common ancestors tend to be ancient. Such regions may contribute to large estimates of ancient Ne. The magnitude of this effect presumably depends on the fraction of the genome under selection and the rate of recombination.

In summary, this article describes several processes that can affect estimates of the history of effective population size. This makes existing estimates ambiguous. For example, should we interpret Fig. 1 as evidence of a declining number of archaic individuals, or in terms of gene flow among archaic subpopulations? But these questions also present research opportunities. If the observed decline reflects gene flow, what does this imply about the geographic structure of archaic populations? Can we resolve the ambiguity by integrating samples from different locales, or using archaeological estimates of population density or interregional trade?

References

[1] Fabrizio Mafessoni et al. “A high-coverage Neandertal genome from Chagyrskaya Cave”. Proceedings of the National Academy of Sciences, USA 117.26 (2020), pp. 15132–15136. https://doi.org/10.1073/pnas.2004944117.

[2] Olivier Mazet and Camille Noûs. “Population genetics: coalescence rate and demographic parameters inference”. arXiv, ver. 2 peer-reviewed and recommended by Peer Community In Mathematical and Computational Biology (2023). https://doi.org/10.48550/ARXIV.2207.02111.

[3] Sewall Wright. “Evolution in mendelian populations”. Genetics 16 (1931), pp. 97–159. https://doi.org/10.1093/genetics/16.2.97.

| Population genetics: coalescence rate and demographic parameters inference | Olivier Mazet, Camille Noûs | We propose in this article a brief description of the work, over almost a decade, resulting from a collaboration between mathematicians and biologists from four different research laboratories, identifiable as the c... |  | Genetics and population Genetics, Probability and statistics | Alan Rogers | Joseph Lachance, Anonymous | 2022-07-11 14:03:04 |

13 Dec 2021

Within-host evolutionary dynamics of antimicrobial quantitative resistance

Ramsès Djidjou-Demasse, Mircea T. Sofonea, Marc Choisy, Samuel Alizon

https://hal.archives-ouvertes.fr/hal-03194023

Modelling within-host evolutionary dynamics of antimicrobial resistance

Recommended by Krasimira Tsaneva based on reviews by 2 anonymous reviewers

Antimicrobial resistance (AMR) arises due to two main reasons: pathogens are either intrinsically resistant to the antimicrobials, or they can develop new resistance mechanisms in a continuous fashion over time and space. The latter has been referred to as within-host evolution of antimicrobial resistance and studied in infectious disease settings such as tuberculosis [1]. During antibiotic treatment, for example, within-host evolutionary AMR dynamics play an important role [2] and present significant challenges in terms of optimizing treatment dosage. The study by Djidjou-Demasse et al. [3] contributes to addressing such challenges by developing a modelling approach that utilizes integro-differential equations to mathematically capture continuity in the space of the bacterial resistance levels.

Given its importance as a major public health concern with enormous societal consequences around the world, the evolution of drug resistance in the context of various pathogens has been extensively studied using population genetics approaches [4]. This problem has also been addressed using mathematical modelling approaches, including Ordinary Differential Equations (ODE)-based [5, 6] and, more recently, Stochastic Differential Equations (SDE)-based models [7]. In [3] the authors propose a model of within-host AMR evolution in the absence and presence of drug treatment. The advantage of the proposed modelling approach is that it allows AMR to be represented as a continuous quantitative trait describing the level of resistance of the bacterial population, termed quantitative AMR (qAMR) in [3]. Moreover, consistent with recent experimental evidence [2], the integro-differential equations take into account both the dynamics of the bacterial population density, referred to as “bottleneck size” in [2], and the evolution of its level of resistance due to drug-induced selection.

The model proposed in [3] has been extensively and rigorously analysed to address various scenarios, including the significance of host immune response in drug efficiency, treatment failure and preventive strategies. The drug treatment chosen to be investigated in this study, namely chemotherapy, has been characterised in terms of the level of evolved resistance by the bacterial population in presence of antimicrobial pressure at equilibrium. Furthermore, the effect of the minimal duration of drug administration on bacterial growth and the emergence of AMR has been probed in the model by changing the initial population size and average resistance levels. A potential limitation of the proposed model is the assumption that mutations occur frequently (i.e. during growth), which may not necessarily be the case in certain experimental and/or clinical situations.

References

[1] Castro RAD, Borrell S, Gagneux S (2021) The within-host evolution of antimicrobial resistance in Mycobacterium tuberculosis. FEMS Microbiology Reviews, 45, fuaa071. https://doi.org/10.1093/femsre/fuaa071

[2] Mahrt N, Tietze A, Künzel S, Franzenburg S, Barbosa C, Jansen G, Schulenburg H (2021) Bottleneck size and selection level reproducibly impact evolution of antibiotic resistance. Nature Ecology & Evolution, 5, 1233–1242. https://doi.org/10.1038/s41559-021-01511-2

[3] Djidjou-Demasse R, Sofonea MT, Choisy M, Alizon S (2021) Within-host evolutionary dynamics of antimicrobial quantitative resistance. HAL, hal-03194023, ver. 4 peer-reviewed and recommended by Peer Community in Mathematical and Computational Biology. https://hal.archives-ouvertes.fr/hal-03194023

[4] Wilson BA, Garud NR, Feder AF, Assaf ZJ, Pennings PS (2016) The population genetics of drug resistance evolution in natural populations of viral, bacterial and eukaryotic pathogens. Molecular Ecology, 25, 42–66. https://doi.org/10.1111/mec.13474

[5] Blanquart F, Lehtinen S, Lipsitch M, Fraser C (2018) The evolution of antibiotic resistance in a structured host population. Journal of The Royal Society Interface, 15, 20180040. https://doi.org/10.1098/rsif.2018.0040

[6] Jacopin E, Lehtinen S, Débarre F, Blanquart F (2020) Factors favouring the evolution of multidrug resistance in bacteria. Journal of The Royal Society Interface, 17, 20200105. https://doi.org/10.1098/rsif.2020.0105

[7] Igler C, Rolff J, Regoes R (2021) Multi-step vs. single-step resistance evolution under different drugs, pharmacokinetics, and treatment regimens (BS Cooper, PJ Wittkopp, Eds.). eLife, 10, e64116. https://doi.org/10.7554/eLife.64116

| Within-host evolutionary dynamics of antimicrobial quantitative resistance | Ramsès Djidjou-Demasse, Mircea T. Sofonea, Marc Choisy, Samuel Alizon | Antimicrobial efficacy is traditionally described by a single value, the minimal inhibitory concentration (MIC), which is the lowest concentration that prevents visible growth of the bacterial population. As a conse... |  | Dynamical systems, Epidemiology, Evolutionary Biology, Medical Sciences | Krasimira Tsaneva | 2021-04-16 16:55:19 |

26 Feb 2024

A workflow for processing global datasets: application to intercropping

Rémi Mahmoud, Pierre Casadebaig, Nadine Hilgert, Noémie Gaudio

https://hal.science/hal-04145269

Collecting, assembling and sharing data in crop sciences

Recommended by Eric Tannier based on reviews by Christine Dillmann and 2 anonymous reviewers

It is often the case that scientific knowledge exists but is scattered across numerous experimental studies. Because of this dispersion in different formats, it remains difficult to access, extract, reproduce, confirm or generalise. This is the case in crop science, where Mahmoud et al. [1] propose to collect and assemble data from numerous field experiments on intercropping. It happens that the construction of the global dataset requires a lot of time, attention and a well thought-out method, inspired by the literature on data science [2] and adapted to the specificities of crop science. This activity also leads to new possibilities that were not available in individual datasets, such as the detection of full factorial designs using graph theory tools developed on top of the global dataset. The study by Mahmoud et al. [1] thus has multiple dimensions:

I was particularly interested in the promotion of the FAIR principles, perhaps used a little too uncritically in my view, as an obvious solution to data sharing. On the one hand, I admire and am grateful for the availability of these data, some of which have never been published, nor associated with published results. This approach is likely to unearth buried treasures. On the other hand, I can understand the reluctance of some data producers to commit to total, definitive sharing, facilitating automatic reading, without having thought about a certain reciprocity on the part of users and use by artificial intelligence. Reciprocity in terms of recognition, as is discussed by Mahmoud et al. [1], but also in terms of contribution to the commons [5] or reading conditions for machine learning.

References

[1] Mahmoud R., Casadebaig P., Hilgert N., Gaudio N. A workflow for processing global datasets: application to intercropping. 2024. ⟨hal-04145269v2⟩ ver. 2 peer-reviewed and recommended by Peer Community in Mathematical and Computational Biology. https://hal.science/hal-04145269

[2] Wickham, H. 2014. Tidy data. Journal of Statistical Software 59(10) https://doi.org/10.18637/jss.v059.i10

[3] Gaudio, N., R. Mahmoud, L. Bedoussac, E. Justes, E.-P. Journet, et al. 2023. A global dataset gathering 37 field experiments involving cereal-legume intercrops and their corresponding sole crops. https://doi.org/10.5281/zenodo.8081577

[4] Mahmoud, R., Casadebaig, P., Hilgert, N. et al. Species choice and N fertilization influence yield gains through complementarity and selection effects in cereal-legume intercrops. Agron. Sustain. Dev. 42, 12 (2022). https://doi.org/10.1007/s13593-022-00754-y

[5] Bernault, C. « Licences réciproques » et droit d'auteur : l'économie collaborative au service des biens communs ?. Mélanges en l'honneur de François Collart Dutilleul, Dalloz, pp.91-102, 2017, 978-2-247-17057-9. https://shs.hal.science/halshs-01562241

| A workflow for processing global datasets: application to intercropping | Rémi Mahmoud, Pierre Casadebaig, Nadine Hilgert, Noémie Gaudio | Field experiments are a key source of data and knowledge in agricultural research. An emerging practice is to compile the measurements and results of these experiments (rather than the results of publications, as in meta-analysis) into global d... |  | Agricultural Science | Eric Tannier | 2023-06-29 15:38:28 |

18 Sep 2023

Minimal encodings of canonical k-mers for general alphabets and even k-mer sizes

Recommended by Paul Medvedev based on reviews by 2 anonymous reviewers

As part of many bioinformatics tools, one encodes a k-mer, which is a string, into an integer. The natural encoding uses a bijective function to map the k-mers onto the interval [0, s^k - 1], where s is the alphabet size. This encoding is minimal, in the sense that the encoded integer ranges from 0 to the number of represented k-mers minus 1. However, often one is only interested in encoding canonical k-mers. One common definition is that a k-mer is canonical if it is lexicographically not larger than its reverse complement. In this case, only about half the k-mers from the universe of k-mers are canonical, and the natural encoding is no longer minimal. For the special case of a DNA alphabet and odd k, there exists a "parity-based" encoding for canonical k-mers which is minimal.

In [1], the author presents a minimal encoding for canonical k-mers that works for general alphabets and both odd and even k. They also give an efficient bit-based representation for the DNA alphabet.

This paper fills a theoretically interesting and often overlooked gap in how to encode k-mers as integers. It is not yet clear what practical applications this encoding will have, as the author readily acknowledges in the manuscript. Neither the author nor the reviewers are aware of any practical situations where the lack of a minimal encoding "leads to serious limitations." However, even in an applied field like bioinformatics, it would be short-sighted to only value theoretical work that has an immediate application; often, the application is several hops away and not apparent at the time of the original work.

In fact, I would speculate that there may be significant benefits reaped if there was more theoretical attention paid to the fact that k-mers are often restricted to be canonical. Many papers in the field sweep under the rug the fact that k-mers are made canonical, leaving it as an implementation detail. This may indicate that the theory to describe and analyze this situation is underdeveloped. This paper makes a step forward to develop this theory, and I am hopeful that it may lead to substantial practical impact in the future.

References

[1] Roland Wittler (2023) "General encoding of canonical k-mers". bioRxiv, ver. 2, peer-reviewed and recommended by Peer Community in Mathematical and Computational Biology. https://doi.org/10.1101/2023.03.09.531845

| General encoding of canonical *k*-mers | Roland Wittler | To index or compare sequences efficiently, often *k*-mers, i.e., substrings of fixed length *k*, are used. For efficient indexing or storage, *k*-mers are encoded as integers, e.g., applying som... |  | Combinatorics, Computational complexity, Genomics and Transcriptomics | Paul Medvedev | Anonymous | 2023-03-13 17:01:37 |
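The gap between the natural encoding and a minimal encoding of canonical k-mers can be seen in a few lines. The sketch below implements only the natural base-s encoding and the canonical test described above, for the DNA alphabet in the order A, C, G, T (an assumption); it does not implement the minimal encoding of [1], and all function names are hypothetical.

```python
from itertools import product

DNA = "ACGT"  # assumed alphabet order; rank of each letter is its index
COMPLEMENT = {"A": "T", "C": "G", "G": "C", "T": "A"}

def encode(kmer: str, alphabet: str = DNA) -> int:
    """Natural encoding: read the k-mer as a base-s number,
    giving a value in [0, s^k - 1]."""
    s = len(alphabet)
    rank = {c: i for i, c in enumerate(alphabet)}
    value = 0
    for c in kmer:
        value = value * s + rank[c]
    return value

def reverse_complement(kmer: str) -> str:
    return "".join(COMPLEMENT[c] for c in reversed(kmer))

def is_canonical(kmer: str) -> bool:
    """Canonical = lexicographically not larger than the reverse complement."""
    return kmer <= reverse_complement(kmer)

def canonical_kmers(k: int) -> list[str]:
    return [km for km in ("".join(p) for p in product(DNA, repeat=k))
            if is_canonical(km)]

# For k = 2 there are 10 canonical k-mers, yet their natural codes
# are not confined to 0..9, so the natural encoding is not minimal.
canon2 = canonical_kmers(2)
print(len(canon2), max(encode(km) for km in canon2))
```

For even k, k-mers that equal their own reverse complement (such as AT or CG) are why the count is slightly more than half of all k-mers; a minimal encoding would map the canonical k-mers onto a gap-free range.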

24 Dec 2020

A linear time solution to the Labeled Robinson-Foulds Distance problem

Samuel Briand, Christophe Dessimoz, Nadia El-Mabrouk and Yannis Nevers

https://doi.org/10.1101/2020.09.14.293522

Comparing reconciled gene trees in linear time

Recommended by Céline Scornavacca based on reviews by Barbara Holland, Gabriel Cardona, Jean-Baka Domelevo Entfellner and 1 anonymous reviewer

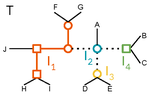

Unlike a species tree, a gene tree results not only from speciation events, but also from events acting at the gene level, such as duplications and losses of gene copies, and gene transfer events [1]. The reconciliation of phylogenetic trees consists in embedding a given gene tree into a known species tree and, doing so, determining the location of these gene-level events on the gene tree [2]. Reconciled gene trees can be seen as phylogenetic trees where internal node labels are used to discriminate between different gene-level events. Comparing them is of foremost importance in order to assess the performance of various reconciliation methods (e.g. [3]).

References

[1] Maddison, W. P. (1997). Gene trees in species trees. Systematic Biology, 46(3), 523-536. https://doi.org/10.1093/sysbio/46.3.523

| A linear time solution to the Labeled Robinson-Foulds Distance problem | Samuel Briand, Christophe Dessimoz, Nadia El-Mabrouk and Yannis Nevers | Motivation Comparing trees is a basic task for many purposes, and especially in phylogeny where different tree reconstruction tools may lead to different trees, likely representing contradictory evolutionary information. While a large variety o... |  | Combinatorics, Design and analysis of algorithms, Evolutionary Biology | Céline Scornavacca | 2020-08-20 21:06:23 |

19 Sep 2022

HMMploidy: inference of ploidy levels from short-read sequencing data

Samuele Soraggi, Johanna Rhodes, Isin Altinkaya, Oliver Tarrant, Francois Balloux, Matthew C Fisher, Matteo Fumagalli

https://doi.org/10.1101/2021.06.29.450340

Detecting variation in ploidy within and between genomes

Recommended by Alan Rogers based on reviews by Barbara Holland, Benjamin Peter and Nicolas Galtier

Soraggi et al. [2] describe HMMploidy, a statistical method that takes DNA sequencing data as input and uses a hidden Markov model to estimate ploidy. The method allows ploidy to vary not only between individuals, but also between and even within chromosomes. This allows the method to detect aneuploidy and also chromosomal regions in which multiple paralogous loci have been mistakenly assembled on top of one another.

HMMploidy estimates genotypes and ploidy simultaneously, with a separate estimate for each genome. The genome is divided into a series of non-overlapping windows (typically 100), and HMMploidy provides a separate estimate of ploidy within each window of each genome. The method is thus estimating a large number of parameters, and one might assume that this would reduce its accuracy. However, it benefits from large samples of genomes. Large samples increase the accuracy of internal allele frequency estimates, and this improves the accuracy of genotype and ploidy estimates. In large samples of low-coverage genomes, HMMploidy outperforms all other estimators. It does not require a reference genome of known ploidy.

The power of the method increases with coverage and sample size but decreases with ploidy. Consequently, high coverage or large samples may be needed if ploidy is high. The method is slower than some alternative methods, but run time is not excessive. Run time increases with number of windows but isn't otherwise affected by genome size. It should be feasible even with large genomes, provided that the number of windows is not too large.

The authors apply their method and several alternatives to isolates of a pathogenic yeast, Cryptococcus neoformans, obtained from HIV-infected patients. With these data, HMMploidy replicated previous findings of polyploidy and aneuploidy. There were several surprises. For example, HMMploidy estimates the same ploidy in two isolates taken on different days from a single patient, even though sequencing coverage was three times as high on the later day as on the earlier one. These findings were replicated in data that were down-sampled to mimic low coverage.

Three alternative methods (ploidyNGS [1], nQuire, and nQuire.Den [3]) estimated the highest ploidy considered in all samples from each patient. The present authors suggest that these results are artifactual and reflect the wide variation in allele frequencies. Because of this variation, these methods seem to have preferred the model with the largest number of parameters.

HMMploidy represents a new and potentially useful tool for studying variation in ploidy. It will be of most use in studying the genetics of asexual organisms and cancers, where aneuploidy imposes little or no penalty on reproduction. It should also be useful for detecting assembly errors in de novo genome sequences from non-model organisms.

References

[1] Augusto Corrêa dos Santos R, Goldman GH, Riaño-Pachón DM (2017) ploidyNGS: visually exploring ploidy with Next Generation Sequencing data. Bioinformatics, 33, 2575–2576. https://doi.org/10.1093/bioinformatics/btx204

[2] Soraggi S, Rhodes J, Altinkaya I, Tarrant O, Balloux F, Fisher MC, Fumagalli M (2022) HMMploidy: inference of ploidy levels from short-read sequencing data. bioRxiv, 2021.06.29.450340, ver. 6 peer-reviewed and recommended by Peer Community in Mathematical and Computational Biology. https://doi.org/10.1101/2021.06.29.450340

[3] Weiß CL, Pais M, Cano LM, Kamoun S, Burbano HA (2018) nQuire: a statistical framework for ploidy estimation using next generation sequencing. BMC Bioinformatics, 19, 122. https://doi.org/10.1186/s12859-018-2128-z

| HMMploidy: inference of ploidy levels from short-read sequencing data | Samuele Soraggi, Johanna Rhodes, Isin Altinkaya, Oliver Tarrant, Francois Balloux, Matthew C Fisher, Matteo Fumagalli | The inference of ploidy levels from genomic data is important to understand molecular mechanisms underpinning genome evolution. However, current methods based on allele frequency and sequencing depth variation do not have power to infer ploidy ... |  | Design and analysis of algorithms, Evolutionary Biology, Genetics and population Genetics, Probability and statistics | Alan Rogers | 2021-07-01 05:26:31 |
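To give a feel for why read counts carry information about ploidy, the sketch below uses a simple binomial read model: a genotype with j copies of the alternate allele out of n chromosome copies is expected to produce alternate reads at frequency j/n, perturbed by a sequencing error rate. This is an illustrative assumption, not HMMploidy's actual likelihood or its hidden Markov model; the function names and the 1% error rate are hypothetical.

```python
from fractions import Fraction
from math import comb

def expected_alt_fractions(ploidy: int) -> list[Fraction]:
    """Expected alternate-allele read fraction for each genotype
    (0..ploidy copies of the alternate allele), ignoring errors."""
    return [Fraction(j, ploidy) for j in range(ploidy + 1)]

def read_likelihood(alt_reads: int, depth: int, alt_copies: int,
                    ploidy: int, error: float = 0.01) -> float:
    """Binomial probability of seeing alt_reads alternate reads out of
    depth reads, given a genotype with alt_copies of ploidy copies.
    error is an assumed symmetric per-read miscall rate."""
    p = alt_copies / ploidy
    p_obs = p * (1 - error) + (1 - p) * error
    return comb(depth, alt_reads) * p_obs**alt_reads * (1 - p_obs)**(depth - alt_reads)

# 10 alternate reads out of 30: compare a diploid heterozygote (1 of 2)
# with a triploid carrying one alternate copy (1 of 3).
print(expected_alt_fractions(3))
print(read_likelihood(10, 30, 1, 2), read_likelihood(10, 30, 1, 3))
```

At a single site the two likelihoods can be close when depth is low, which is consistent with the point above that power grows with coverage and sample size.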

18 Apr 2023

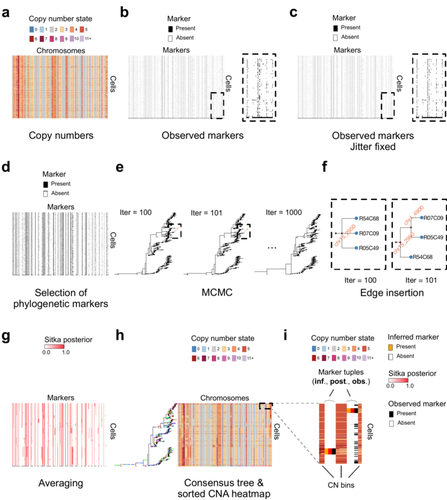

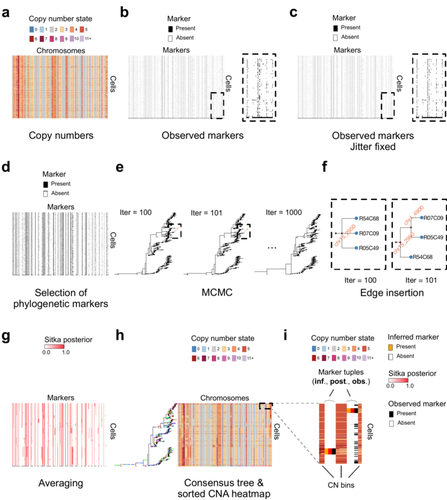

Cancer phylogenetic tree inference at scale from 1000s of single cell genomes

Sohrab Salehi, Fatemeh Dorri, Kevin Chern, Farhia Kabeer, Nicole Rusk, Tyler Funnell, Marc J Williams, Daniel Lai, Mirela Andronescu, Kieran R. Campbell, Andrew McPherson, Samuel Aparicio, Andrew Roth, Sohrab Shah, and Alexandre Bouchard-Côté

https://doi.org/10.1101/2020.05.06.058180

Phylogenetic reconstruction from copy number aberration in large scale, low-depth genome-wide single-cell data.

Recommended by Amaury Lambert based on reviews by 3 anonymous reviewers

The paper [1] presents and applies a new Bayesian inference method of phylogenetic reconstruction for multiple sequence alignments in the case of low sequencing coverage but diverse copy number aberrations (CNA), with applications to single cell sequencing of tumors.

The idea is to take advantage of CNA to reconstruct the topology of the phylogenetic tree of sequenced cells in a first step (the 'sitka' method), and in a second step to assign single nucleotide variants (SNV) to tree edges (and then calibrate their lengths) (the 'sitka-snv' method).

The data are summarized into a binary-valued C×L matrix Y, where C is the number of cells and L is the number of loci (here, loci are segments of prescribed length called 'bins'). The entry of Y at row i and column j is 1 (otherwise 0) if and only if, in the ancestral lineage of cell i, at least one genomic rearrangement has occurred, and more specifically the gain or loss of a segment with at least one endpoint in locus j or in locus j+1. The authors expect the infinite-allele assumption to approximately hold (i.e., that at most one mutation occurs at any given marker and that 0 is the ancestral state). They refer to this assumption as the 'perfect phylogeny assumption'. By only recording from CNA events the endpoints at which they occur, the authors lose the information on copy number, but they gain the assumption of independence of the mutational processes occurring at different sites, which approximately holds for CNA endpoints.

The goal of sitka is to produce a posterior distribution on phylogenetic trees conditional on the matrix Y, where here a phylogenetic tree is understood as containing the information on 1) the topology of the tree but not its edge lengths, and 2) for each edge, the identity of markers having undergone a mutation, in the sense of the previous paragraph.

The results of the method are tested against synthetic datasets simulated under various assumptions, including conditions violating the perfect phylogeny assumption, and compared to results obtained under other baseline methods. The method is extended to assign SNV to edges of the tree inferred by sitka. It is also applied to real datasets of single cell genomes of tumors.

The manuscript is very well-written, with a high degree of detail. The method is novel, scalable, fast and appears to perform favorably compared to other approaches. It has been applied in independent publications, for example to multi-year time-series single-cell whole-genome sequencing of tumors, in order to infer the fitness landscape and its dynamics through time, see [2].

The reviewing process has taken too long, mainly because of other commitments I had during the period and the difficulty of finding reviewers. Let me apologize to the authors and thank them for their patience as well as for the scientific rigor they brought to their revisions and answers to reviewers, who I also warmly thank for their quality work.

References

[1] Sohrab Salehi, Fatemeh Dorri, Kevin Chern, Farhia Kabeer, Nicole Rusk, Tyler Funnell, Marc J Williams, Daniel Lai, Mirela Andronescu, Kieran R. Campbell, Andrew McPherson, Samuel Aparicio, Andrew Roth, Sohrab Shah, and Alexandre Bouchard-Côté. Cancer phylogenetic tree inference at scale from 1000s of single cell genomes (2023). bioRxiv, 2020.05.06.058180, ver. 4 peer-reviewed and recommended by Peer Community in Mathematical and Computational Biology.

[2] Sohrab Salehi, Farhia Kabeer, Nicholas Ceglia, Mirela Andronescu, Marc J. Williams, Kieran R. Campbell, Tehmina Masud, Beixi Wang, Justina Biele, Jazmine Brimhall, David Gee, Hakwoo Lee, Jerome Ting, Allen W. Zhang, Hoa Tran, Ciara O’Flanagan, Fatemeh Dorri, Nicole Rusk, Teresa Ruiz de Algara, So Ra Lee, Brian Yu Chieh Cheng, Peter Eirew, Takako Kono, Jenifer Pham, Diljot Grewal, Daniel Lai, Richard Moore, Andrew J. Mungall, Marco A. Marra, IMAXT Consortium, Andrew McPherson, Alexandre Bouchard-Côté, Samuel Aparicio & Sohrab P. Shah. Clonal fitness inferred from time-series modelling of single-cell cancer genomes (2021). Nature 595, 585–590. https://doi.org/10.1038/s41586-021-03648-3

| Cancer phylogenetic tree inference at scale from 1000s of single cell genomes | Sohrab Salehi, Fatemeh Dorri, Kevin Chern, Farhia Kabeer, Nicole Rusk, Tyler Funnell, Marc J Williams, Daniel Lai, Mirela Andronescu, Kieran R. Campbell, Andrew McPherson, Samuel Aparicio, Andrew Roth, Sohrab Shah, and Alexandre Bouchard-Côté | A new generation of scalable single cell whole genome sequencing (scWGS) methods allows unprecedented high resolution measurement of the evolutionary dynamics of cancer cell populations. Phylogenetic reconstruction ... |  | Evolutionary Biology, Genetics and population Genetics, Genomics and Transcriptomics, Machine learning, Probability and statistics | Amaury Lambert | 2021-12-10 17:08:04 |
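The perfect phylogeny assumption on the binary matrix Y can be checked with a classic combinatorial test: when 0 is the ancestral state and each marker mutates at most once, the sets of cells carrying a 1 in any two columns must be either disjoint or nested. The sketch below applies that pairwise test to a small list-of-lists matrix; it only illustrates the assumption and is not sitka's Bayesian inference procedure. The function name and the toy matrices are hypothetical.

```python
def perfect_phylogeny_conflicts(Y: list[list[int]]) -> list[tuple[int, int]]:
    """Return the pairs of columns (loci) whose carrier sets overlap
    without one containing the other. An empty list means the matrix
    is compatible with a perfect phylogeny rooted at the all-zero state."""
    n_loci = len(Y[0]) if Y else 0
    # carriers[j] = set of cells (row indices) with a 1 at locus j
    carriers = [frozenset(i for i, row in enumerate(Y) if row[j] == 1)
                for j in range(n_loci)]
    conflicts = []
    for a in range(n_loci):
        for b in range(a + 1, n_loci):
            A, B = carriers[a], carriers[b]
            if A & B and not (A <= B or B <= A):
                conflicts.append((a, b))
    return conflicts

# Toy data: rows are cells, columns are loci.
compatible = [[1, 1, 0],
              [1, 0, 0],
              [0, 0, 1]]
conflicting = [[1, 1],
               [1, 0],
               [0, 1]]
print(perfect_phylogeny_conflicts(compatible))
print(perfect_phylogeny_conflicts(conflicting))
```

This pairwise scan is quadratic in the number of loci and is meant only for small examples; real single-cell data also contain noise, which is one reason a probabilistic treatment is used rather than an exact test.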

27 Jul 2021

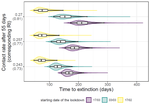

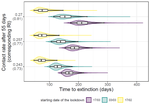

Estimating dates of origin and end of COVID-19 epidemics. Thomas Bénéteau, Baptiste Elie, Mircea T. Sofonea, Samuel Alizon. https://doi.org/10.1101/2021.01.19.21250080 The importance of model assumptions in estimating the dynamics of the COVID-19 epidemic. Recommended by Valery Forbes based on reviews by Bastien Boussau and 1 anonymous reviewer. In “Estimating dates of origin and end of COVID-19 epidemics”, Bénéteau et al. develop and apply a mathematical modeling approach to estimate the date of the origin of the SARS-CoV-2 epidemic in France. They also assess how long strict control measures need to last to ensure that the prevalence of the virus remains below key public health thresholds. This problem is challenging because the numbers of infected individuals in both tails of the epidemic are low, which can lead to errors when deterministic models are used. To achieve their goals, the authors developed a discrete stochastic model. The model is non-Markovian, meaning that individual infection histories influence the dynamics. It also accounts for heterogeneity in the timing between infection and transmission, and it includes stochasticity as well as superspreader events. By comparing the outputs of their model with those of several alternative models, Bénéteau et al. were able to assess the importance of stochasticity, individual heterogeneity, and non-Markovian effects on the estimates of the dates of origin and end of the epidemic, using France as a test case. Some limitations of the study, which the authors acknowledge, are the largely unknown time from infection to death, the lack of data on the heterogeneity of transmission among individuals, and the assumption that only a single infected individual caused the epidemic. Despite these limitations, the results suggest that cases may be detected long before the detection of an epidemic wave. 
Also, the approach may be helpful for informing public health decisions such as the necessary duration of strict lockdowns and for assessing the risks of epidemic rebound as restrictions are lifted. In particular, the authors found that estimates of the end of the epidemic following lockdowns are more sensitive to the assumptions of the models used than estimates of its beginning. In summary, this model adds to a valuable suite of tools to support decision-making in response to disease epidemics. References Bénéteau T, Elie B, Sofonea MT, Alizon S (2021) Estimating dates of origin and end of COVID-19 epidemics. medRxiv, 2021.01.19.21250080, ver. 3 peer-reviewed and recommended by Peer Community in Mathematical and Computational Biology. https://doi.org/10.1101/2021.01.19.21250080 | Estimating dates of origin and end of COVID-19 epidemics | Thomas Bénéteau, Baptiste Elie, Mircea T. Sofonea, Samuel Alizon | Estimating the date at which an epidemic started in a country and the date at which it can end depending on interventions intensity are important to guide public health responses. Both are potentially shaped by simi... |  | Epidemiology, Probability and statistics, Stochastic dynamics | Valery Forbes | 2021-02-23 16:37:32 | View |
